Overview

Today, countless websites and web applications deliver services and content to end users. The delivery infrastructure behind those applications makes a huge difference in the performance and reliability that users experience and, ultimately, in the success or failure of your business or organization.

Web page speed is very important. For years, analysts have been monitoring user behavior and have come up with what’s colloquially known as the “N second rule”. This is how long an average user is prepared to wait for a page to load and render before getting impatient and moving to a different website, possibly a competitor's. The figure has shrunk over the years:

N second rules

- 10-second rule (Jakob Nielsen, March 1997)

- 8-second rule (Zona Research, June 2001)

- 4-second rule (Jupiter Research, June 2006)

- 3-second rule (PhocusWright, March 2010)

As technology advances, we keep getting new strategies and methods to make content delivery as fast as possible. Users now expect to navigate from one page to another as quickly as they turn a page in a book. If we fail to meet the expected level of performance, the success of our website or web application can suffer significantly.

This article covers caching strategies widely used with well-known open source caching services like Redis and Memcached, as well as web servers like Nginx or Apache. We won't cover all the implementation details, but will try to explain the main idea of caching, so you can understand what it is for, how it works, and what its advantages and disadvantages are.

Basic principles of caching

A content cache sits between the client and the origin server and saves the content it sees. If the client requests content that the cache has stored, the cache returns it directly, without contacting the origin server.

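It is important to note that cache engines such as Redis and Memcached keep their data in memory rather than on disk. That’s why reading from them is usually faster than retrieving the data from disk-based storage (e.g. a relational database). Web server caches such as those in Nginx or Apache can also store cached responses on disk, but a cached response still saves the cost of regenerating it at the origin.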

For websites, static assets (images, audio files, video files, fonts, etc.) are easy to cache. For web applications that serve dynamic and personalized content, things become more complicated.

We have to make sure that we deliver up-to-date information to the end user. Below, we’ll discuss how to achieve this while reducing latency and increasing the performance and reliability of our websites and web applications.

Caching Strategies

The strategy that we want to use for our cache implementation highly depends on what data we want to serve and what patterns we use to access that data. There are two well-known strategies that I would like to talk about in this article:

- Lazy loading

- Write through

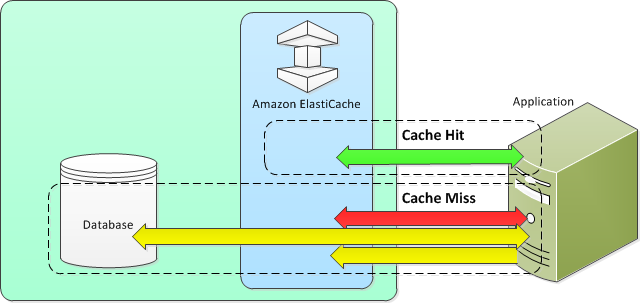

How the lazy loading strategy works:

The main idea behind the lazy loading strategy is to cache data only when a user requests it; there is no need to cache data that has never been requested. For example, when the user requests the list of latest posts, the request goes to the cache engine first, which checks whether it has something to respond with. If it does, it sends the response back. If it does not, the request goes to the database, the data is retrieved, saved to the cache and returned to the user, so all further requests for the list of posts are answered from the cache. So there are two scenarios:

Cache HIT – When data is in the cache and isn’t expired

- Client requests data from the cache.

- Cache returns the data to the application.

Cache MISS – When the data isn’t in the cache or it is expired

- Client requests data from the cache.

- Cache doesn’t have the requested data, so it returns a null.

- Client requests and receives the data from the database.

- Application backend updates the cache with the new data.
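The two scenarios above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: plain dictionaries stand in for the cache and the database, and the names `DATABASE`, `query_database` and `get_lazy` are hypothetical, not part of any real library.

```python
from typing import Dict, Optional

# Hypothetical stand-ins: one dict plays the database, another plays the cache.
DATABASE: Dict[str, str] = {"post:1": "Hello, world", "post:2": "Caching 101"}
cache: Dict[str, str] = {}
db_queries = 0  # counts trips to the database, for illustration only


def query_database(key: str) -> Optional[str]:
    """Simulate a (slow) database lookup."""
    global db_queries
    db_queries += 1
    return DATABASE.get(key)


def get_lazy(key: str) -> Optional[str]:
    """Lazy loading: ask the cache first, fall back to the database on a miss."""
    value = cache.get(key)
    if value is not None:
        return value                 # cache HIT
    value = query_database(key)      # cache MISS: go to the database
    if value is not None:
        cache[key] = value           # populate the cache for later requests
    return value


first = get_lazy("post:1")   # miss: served by the database, then cached
second = get_lazy("post:1")  # hit: served by the cache, no database query
```

After both calls the database has been queried only once, and `post:2`, which nobody requested, never entered the cache.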

Advantages of a lazy loading strategy

- We cache only what we need. Data that is never requested by a client never ends up in cache storage.

- Node failures are not fatal. When a failed node is replaced by a new, empty one, the application continues to function: each cache miss results in a database query whose result is added to the cache, so subsequent requests are served from the cache.

Disadvantages of a lazy loading strategy

- There is a cache miss penalty. Each cache miss results in three trips, which can increase latency:

  - The initial request for data from the cache

  - The query of the database for the data

  - Writing the data to the cache

- Stale data. If data is written to the cache only when there is a cache miss, data in the cache can become stale, since the cache is not updated when the data changes in the database.
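Both disadvantages can be reproduced with a small simulation. The sketch below assumes dictionaries as stand-ins for the cache and the database and records each round trip in a hypothetical `trips` list; it is meant only to make the three trips and the stale read visible.

```python
from typing import Dict, List

database: Dict[str, str] = {"user:42:name": "Alice"}
cache: Dict[str, str] = {}
trips: List[str] = []  # records every round trip, for illustration only


def read(key: str) -> str:
    """Lazy-loading read that logs each trip it makes."""
    trips.append("cache read")
    if key in cache:
        return cache[key]
    trips.append("database query")
    value = database[key]
    trips.append("cache write")
    cache[key] = value
    return value


read("user:42:name")            # first read is a miss
miss_trips = list(trips)        # three trips were needed
trips.clear()

# The record changes in the database, but nothing updates the cache.
database["user:42:name"] = "Alicia"

stale_value = read("user:42:name")  # a hit, but the value is outdated
hit_trips = list(trips)             # only one trip this time
```

The second read is fast, yet it returns the old name because the cache was never told about the change.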

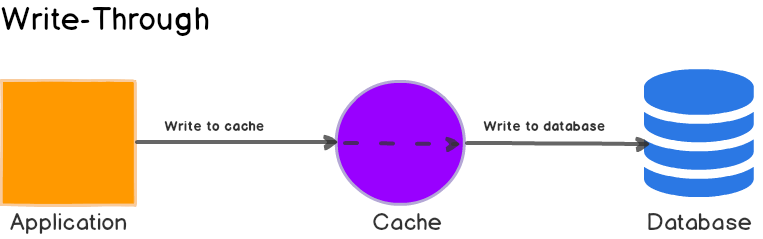

How the write through strategy works:

The main idea behind the write through strategy is to update the cache whenever the database creates or updates a record. That means that whenever an operation adds a new record to the database or updates an existing one, we also put that data into cache storage.
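A minimal sketch of this idea, again with dictionaries as assumed stand-ins for the database and the cache. The function names `write_through` and `read` are hypothetical, and writing to the database before the cache is a choice made for this example, not something the strategy prescribes.

```python
from typing import Dict, Optional

database: Dict[str, str] = {}
cache: Dict[str, str] = {}


def write_through(key: str, value: str) -> None:
    """Every create or update touches both stores."""
    database[key] = value  # write to the database
    cache[key] = value     # write the same value to the cache


def read(key: str) -> Optional[str]:
    """Reads are served from the cache, which writes keep up to date."""
    return cache.get(key)


write_through("post:1", "Draft")
write_through("post:1", "Published")  # the update refreshes the cache too
current = read("post:1")
```

Because every write goes through `write_through`, the cached value always matches the database in this sketch.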

Advantages of Write Through Strategy

- Data in the cache is never stale, because we update it on every write.

- Write penalty rather than read penalty. The extra latency is paid on writes, not on reads. Every write or update involves two operations:

  - A write to the cache storage

  - A write to the database

Disadvantages of Write Through Strategy

- Missing data. When a new node is spun up, whether because of a node failure or scaling out, data is missing from its cache storage until that data is added or updated in the database.

- Cache churn. Much of the data may never be read, so a lot of what sits in the cache is a waste of memory.

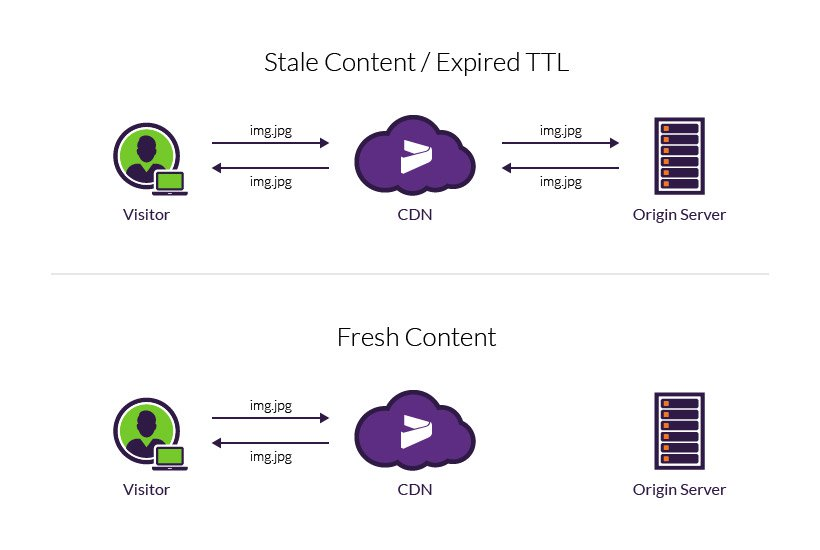

TTL (Time To Live)

TTL is an integer value that specifies the number of seconds or milliseconds (depending on the implementation) until a key expires. When an application attempts to read an expired key from cache storage, the key is treated as though it was not found, meaning that the database is queried for the key and the cache is updated.

This does not guarantee that a value is not stale, but it keeps data from getting too stale and requires that values in the cache are occasionally refreshed from the database.
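Expiry can be illustrated with a tiny in-memory cache. This is a sketch under assumptions, not how any particular cache engine implements TTL: the `TTLCache` class is hypothetical, and the clock is injectable only so that the example can move time forward without sleeping.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """Minimal in-memory cache whose keys expire after a TTL in seconds."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._items: Dict[str, Tuple[Any, float]] = {}

    def set(self, key: str, value: Any, ttl_seconds: float) -> None:
        # Store the value together with the moment it expires.
        self._items[key] = (value, self._clock() + ttl_seconds)

    def get(self, key: str) -> Optional[Any]:
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            # An expired key is treated exactly like a missing key.
            del self._items[key]
            return None
        return value


# Usage with a fake clock so that time can be advanced manually.
now = [0.0]
ttl_cache = TTLCache(clock=lambda: now[0])
ttl_cache.set("greeting", "hello", ttl_seconds=30)
fresh = ttl_cache.get("greeting")    # still within the 30 seconds
now[0] = 31.0
expired = ttl_cache.get("greeting")  # past the TTL, behaves like a miss
```

A caller that receives `None` would then query the database and store the result again with a new TTL.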

How TTL can help to cover disadvantages of both strategies described above

Lazy loading allows for stale data, but won’t fail with empty nodes. Write through ensures that data is always fresh, but may fail with empty nodes and may populate the cache with superfluous data. By adding a time to live (TTL) value to each write, we get the advantages of both strategies and largely avoid cluttering up the cache with superfluous data.
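One way to picture the combination is a small store that writes through on updates, lazy loads on misses and attaches a TTL to every cache entry. The sketch below is an assumption-laden illustration: `CachedStore`, `TTL_SECONDS` and the injectable clock are hypothetical names chosen for this example, and dictionaries stand in for the real cache and database.

```python
import time
from typing import Callable, Dict, Optional, Tuple

TTL_SECONDS = 60.0  # hypothetical value; tune it to how stale data may get


class CachedStore:
    """Write through + lazy loading, with a TTL on every cache entry."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self.database: Dict[str, str] = {}
        self.cache: Dict[str, Tuple[str, float]] = {}
        self._clock = clock

    def _cache_set(self, key: str, value: str) -> None:
        self.cache[key] = (value, self._clock() + TTL_SECONDS)

    def write(self, key: str, value: str) -> None:
        # Write through: update the database and the cache together.
        self.database[key] = value
        self._cache_set(key, value)

    def read(self, key: str) -> Optional[str]:
        entry = self.cache.get(key)
        if entry is not None and self._clock() < entry[1]:
            return entry[0]              # fresh cache hit
        # Missing or expired: lazy load from the database.
        self.cache.pop(key, None)
        value = self.database.get(key)
        if value is not None:
            self._cache_set(key, value)
        return value


now = [0.0]
store = CachedStore(clock=lambda: now[0])
store.write("post:1", "v1")

# A change that bypasses write(), e.g. made by another service.
store.database["post:1"] = "v2"
before_expiry = store.read("post:1")  # cached copy, stale for now
now[0] = 61.0
after_expiry = store.read("post:1")   # TTL passed, reloaded from the database

# A brand-new, empty cache node still works thanks to lazy loading.
store.cache.clear()
after_restart = store.read("post:1")
```

In this sketch the stale value lives for at most `TTL_SECONDS`, an empty cache refills itself on demand, and entries that are never read again simply expire instead of occupying memory forever.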
